Years ago I worked on a system where database utilization was a known problem. We wanted to do efficiency work on it, but over and over again we deprioritized that work in favor of shipping features and growing faster. Until eventually we were on the largest instance GCP offered and there was nowhere left to scale up. The threat of actual downtime was what it finally took to get the efficiency work done.

I think inference usage is heading toward a similar reckoning. Your roadmap probably assumes inference capacity will be there when you need it. If that assumption breaks, efficient AI use isn’t just about cost. It determines whether your product can keep growing.

Inference workloads are growing fast

McKinsey projects inference workloads growing at a 35% CAGR through 2030, eventually representing 30 to 40 percent of total data center demand. Deloitte's 2026 TMT Predictions put the inference-optimized chip market at over $50 billion this year alone. Every company shipping AI features is running a continuous compute workload, whether they think of it that way or not.

Agentic AI multiplies inference demand

A chatbot is one inference call, maybe two. An agentic system does plan, retrieve, reason, act, verify, retry, with each step hitting the model again. NVIDIA's research on reasoning agents found that a full chain-of-thought pass can require up to 100x more compute than a single-shot reply. Even in less extreme cases, agentic coding tools like Claude Code routinely consume tens of thousands of tokens per session. Multiply that across millions of users and you're looking at a fundamentally different consumption profile.

LLM inference costs are dropping fast

There's a countervailing force worth mentioning. Models are getting dramatically more capable per token every year. GPT-4 launched in March 2023 at $30/$60 per million tokens. Today, GPT-5 delivers substantially better results at $1.25/$10, a 96% drop on input. Google's Gemini 2.5 Flash-Lite runs at $0.10/$0.40. Anthropic's Haiku 4.5 sits at $1/$5, handling tasks that would have required Opus-class models a year ago. This is real efficiency progress and it matters.

Cheaper models, more usage

But there's a catch. As models get cheaper and smarter, people use them for more things. Tasks that weren't worth automating at $30 per million tokens become obvious at $0.10. Features that were too slow with a reasoning chain become viable with faster inference. The Jevons paradox — where efficiency gains increase total consumption rather than reduce it — is alive and well in AI inference.

Why is AI inference supply constrained?

So demand is growing fast. What about supply?

Multiple parts of the AI infrastructure supply chain are showing strain.

Memory. AI accelerators depend on high-bandwidth memory. AI workloads could effectively consume around 20% of global DRAM wafer capacity by 2026 once you account for the fact that HBM uses 4x the wafer area of standard DRAM. DRAM contract prices surged 50 to 70% in early 2026. Memory has historically been one of the hardest semiconductor segments to scale quickly.

GPUs. AI demand is outpacing GPU supply. Some high-end GPU cloud instances saw price increases of roughly 15% in early 2026. Meta is building multiple generations of custom inference chips through 2027 just to keep up with its own workloads. Hyperscalers don't invest in proprietary silicon for fun. They do it when demand significantly exceeds supply.

Power. Even if chips are available, data centers may not have the electricity to run them. Data centers could account for nearly half of new U.S. electricity demand growth through 2030. Some analysts describe an emerging "AI power wall" where grid capacity prevents deployment of additional infrastructure.

Supply may grow 5–10x between now and 2028. Sounds impressive until you realize demand is expanding simultaneously across inference adoption, agentic systems, reasoning models, and enterprise automation. The industry is likely to experience periodic capacity shocks as demand temporarily exceeds infrastructure.

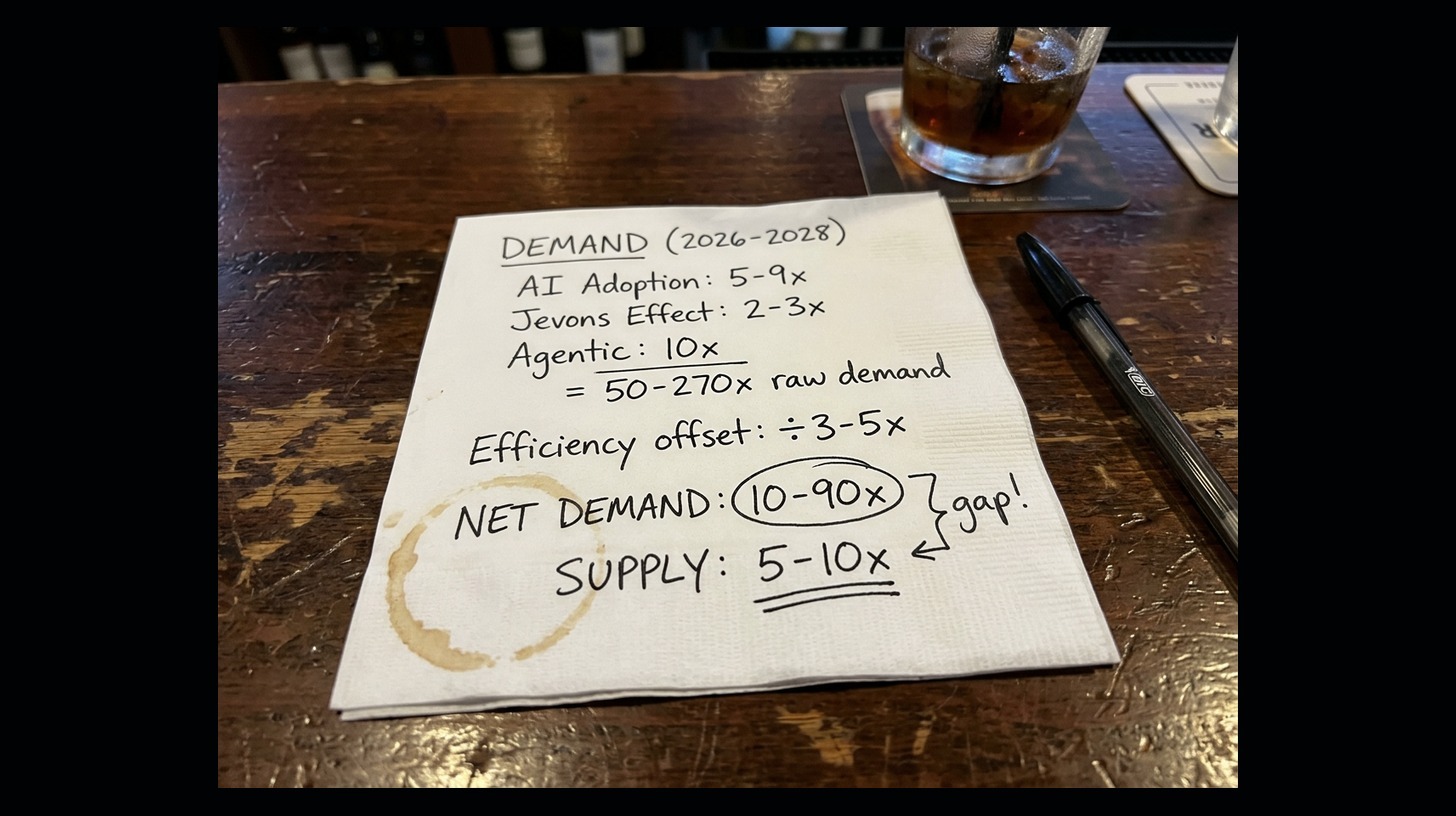

Back-of-the-napkin math

So let's put some rough numbers on the next 18 months.

Demand growth:

| Factor | What we're seeing | 2026–2028 multiplier |

|---|---|---|

| Organic AI adoption (new apps, new users, enterprise rollout) | ~2–3x per year | ~5–9x |

| Jevons effect (cheaper models unlock new use cases) | Tasks not worth doing at $30/M tokens become obvious at $0.10/M | ~2–3x on top of organic |

| Agentic workloads (tokens per task vs. chat) | 5–25x more tokens per session | ~10x (blended) |

Efficiency gains (reduce effective demand):

| Factor | What we're seeing | 2026–2028 multiplier |

|---|---|---|

| Cheaper models matching prior-gen quality | Haiku 4.5 handles last year's Sonnet tasks; GPT-5-nano does GPT-4 work | ~2–3x more work per dollar |

| Token price drops at the same tier | GPT-4 → GPT-5: ~24x over 3 years; Gemini Flash-Lite at $0.10/M | ~2–3x over 18 months |

| Better instruction following (fewer tokens needed) | Less prompting, shorter system messages, tighter outputs | ~1.2–1.5x |

Combined efficiency offset: roughly 3–5x (these overlap, since switching to a cheaper model already captures some of the price drop).

Supply growth:

| Factor | What we're seeing | 2026–2028 multiplier |

|---|---|---|

| Inference capacity buildout (GPUs, chips, data centers) | TSMC CoWoS packaging capacity expanding ~66% in 2026; data center capacity doubling by 2030 | ~5–10x |

Net picture:

| Low estimate | High estimate | |

|---|---|---|

| Raw demand (adoption × Jevons × agentic) | 50x | 270x |

| Efficiency offset | ÷ 5x | ÷ 3x |

| Net demand | 10x | 90x |

| Supply growth | 5x | 10x |

The low end roughly balances. The high end doesn't come close. These are napkin numbers, not a forecast, but they illustrate why even aggressive supply buildout and real model efficiency improvements may not be enough. The gap is most likely to show up as periodic crunches for frontier models and in GPU-constrained regions.

We've seen this before

When infrastructure supply tightens, you don't just get higher prices. You get hard limits. Enterprises waited months for GPU clusters during the 2023–2025 shortage. Auto manufacturers halted production lines during the 2020–2022 chip crunch. DRAM shortages have repeatedly triggered rationing for smaller buyers.

The AI supply chain has the same structural characteristics: long fab lead times, limited facilities, specialized components like HBM. Once demand exceeds supply, availability gets uneven fast.

When inference efficiency becomes more than a cost problem

Most companies treat inference efficiency as a cost problem. Reduce the bill, improve margins. Fair enough. But when capacity tightens, the problem changes shape. It stops being about the bill and starts being about whether the feature works at all.

Rate limits from your model provider. Latency that makes the product feel broken. Getting bounced to a weaker model because the one you need is oversubscribed. Teams are already running into this today, and it'll get more common.

Now picture two competitors in the same market with the same AI capabilities. One burns 40% fewer tokens per interaction because they thought about prompt design and model selection. When capacity gets tight, that team serves 40% more users on the same allocation. That's not a cost savings story. That's a "we can ship and you can't" story.

How to reduce LLM token usage without losing capability

There are real, proven ways to reduce inference consumption without giving up capability. Model routing, prompt caching, output constraints, structured responses, batching. Teams applying these patterns cut token usage by 30 to 50 percent while maintaining quality. We've written detailed guides for OpenAI and Anthropic Claude that walk through the specifics.

Most teams haven't bothered yet because tokens were cheap and available. That calculus is changing.

Where this is headed

For the past decade, cloud engineering has mostly been about cost optimization. The next chapter may be about compute efficiency under constraint.

If inference capacity becomes the new scarce resource, and there are structural reasons to think it will, then the companies that understand and control their inference usage will have a real advantage. Not just lower bills. More users served, more agent tasks completed, more product functionality delivered on the same infrastructure footprint.

At Frugal, this is the problem we're built to solve. We find the wasteful inference patterns hiding in your code, the ones that burn tokens and capacity for no good reason, and surface Frugal Fixes to eliminate them.

Turns out, the best time to get efficient is before scarcity makes it urgent.